Cloud I/O for Media Assets

10 key learnings from moving petabytes to AWS, Azure and Google

Cloud technology is proving to be a disruptive force in almost every industry, and the major public cloud providers (Amazon, Microsoft, and Google) are actively rolling out innovative new services to capitalize on the opportunity. What started back in 2006 with the launch of Amazon’s Simple Storage Service (S3) to provide easy, scalable storage in the cloud has evolved into a landscape that includes everything from elastic compute to machine learning and artificial intelligence tools, advanced media processing and much more. It’s now possible to build almost any software solution using cloud technology, and the power and breadth of these offerings are only growing. While it’s difficult for any industry to keep up with the rush of innovation, it’s particularly challenging in media and entertainment, an industry that has its own unique requirements.

There are many ways to benefit from cloud technology. From building solutions from scratch to deploying full-stack SaaS products, most media companies already leverage the cloud in some way. In this guide we focus on cloud object storage and the best tools for moving assets into and out of the cloud. In general, media companies are not moving all their assets to cloud storage, but many are looking to the cloud for elasticity when there is an unexpected surge in storage needs, for backup and disaster recovery, and for more complex workflows. While moving assets to the cloud may seem simple on the surface, as with many things in M&E, it’s not as trivial as it sounds. In an industry that deals with huge files, complex supply chains and growing security challenges, there are several factors to consider. Fortunately, there is a wide variety of tools available to move content into and out of cloud object storage, including many offered by the cloud providers themselves. Each can be valuable in certain situations. As Signiant has played a pivotal role in helping thousands of media companies on their cloud journeys, we assembled this guide to highlight the key considerations and help you choose the best tools for the job.

Speed

When it comes to moving media assets, speed is a fundamental consideration. In M&E, where deadlines are tight and datasets are measured in gigabytes, terabytes, or even petabytes, standard tools just don’t do the trick. When moving large files over long distances or congested networks, many tools suffer in performance due to latency and packet loss. The bigger the files, the longer the distance, or the more congested the network, the greater the impact on performance, in some cases by a factor of 100. As a software provider to customers who move petabytes of content to and from the cloud monthly, we’ve observed huge variances in network congestion with the public cloud providers at certain times of day, which, without the right software, can lead to unpredictable performance. And even in situations where latency isn’t an issue, traditional transfer methods don’t take full advantage of all available bandwidth.
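To see why latency alone can cost so much, consider a single TCP stream: it can have at most one window of unacknowledged data in flight per round trip, so its throughput is capped at the window size divided by the round-trip time. The sketch below works through that arithmetic. The 64 KiB window (the classic limit when TCP window scaling is not in use) and the round-trip times are illustrative assumptions rather than measurements, and the function name is hypothetical.

```python
def max_tcp_throughput_mbps(window_bytes: int, rtt_ms: float) -> float:
    """Upper bound for one TCP stream: one window of data per round trip."""
    rtt_seconds = rtt_ms / 1000.0
    bits_per_second = window_bytes * 8 / rtt_seconds
    return bits_per_second / 1_000_000


# Assumed window: 64 KiB, the maximum without TCP window scaling.
WINDOW_BYTES = 64 * 1024

# Assumed round-trip times, chosen only to illustrate short vs. long paths.
for label, rtt_ms in [("short path", 1), ("long path", 100)]:
    ceiling = max_tcp_throughput_mbps(WINDOW_BYTES, rtt_ms)
    print(f"{label}: RTT {rtt_ms} ms -> at most {ceiling:.1f} Mbit/s")
```

Under these assumptions the same stream is capped at roughly 524 Mbit/s on the 1 ms path and roughly 5.2 Mbit/s on the 100 ms path, a hundredfold gap before packet loss is even considered. Modern stacks negotiate larger windows, so real-world figures will differ.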

Modern acceleration technology was created for exactly this reason: to help minimize the impact of latency and packet loss and to utilize whatever bandwidth is available. In fact, and this can be somewhat counterintuitive, the more bandwidth available, the more the right acceleration technology will help. Amazon itself offers a service called S3 Transfer Acceleration, which can help speed up data transfers in AWS workflows. It works by moving assets first to the closest edge location available and then accelerating the transfer from the edge to your AWS region of choice. Even though it can shorten the distance of the initial transfer into AWS, congestion may still be an issue. That said, S3 Transfer Acceleration can be an easy way for developers to speed up workflows they have built using Amazon tools, but it still leaves you building your own custom solution. While that might be necessary in situations with truly unique requirements, there are out-of-the-box, full-stack products that offer equal or better performance at a much lower total cost of ownership. For most situations, building your own solution simply doesn’t make sense. Unsurprisingly, S3 Transfer Acceleration also only works with Amazon storage, another important consideration discussed below.

Reliability

When dealing with large files, network instability and file corruption can cause disruptions and delays. Transfer tools must be able to navigate interruptions and deliver files unaltered, with byte-for-byte accuracy. A capability such as checkpoint restart, where interrupted transfers automatically restart from the point of failure, is a must-have for reliable operations.
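As a rough illustration of checkpoint restart and byte-for-byte verification, the sketch below resumes a copy from however many bytes the destination already holds and then compares SHA-256 digests. It is a minimal local-file sketch, not how any particular transfer product works: the function names, the 1 MiB chunk size and the `fail_after_chunks` parameter (used only to simulate an interruption) are all assumptions made for the example.

```python
import hashlib
import os

CHUNK = 1024 * 1024  # 1 MiB per read; an arbitrary choice for this sketch


def sha256_of(path: str) -> str:
    """Hash a file in chunks so large files never need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for block in iter(lambda: f.read(CHUNK), b""):
            digest.update(block)
    return digest.hexdigest()


def resumable_copy(src: str, dst: str, fail_after_chunks=None) -> None:
    """Copy src to dst, resuming from the bytes dst already contains."""
    # The checkpoint is simply the current size of the partial destination.
    offset = os.path.getsize(dst) if os.path.exists(dst) else 0
    with open(src, "rb") as fin, open(dst, "ab") as fout:
        fin.seek(offset)
        chunks_written = 0
        while True:
            block = fin.read(CHUNK)
            if not block:
                break
            fout.write(block)
            chunks_written += 1
            # Hypothetical hook that simulates a dropped connection.
            if fail_after_chunks is not None and chunks_written >= fail_after_chunks:
                raise ConnectionError("simulated network interruption")
```

Calling `resumable_copy(src, dst)` again after a failure picks up at the checkpoint instead of starting over, and comparing `sha256_of(src)` with `sha256_of(dst)` afterwards confirms the file arrived unaltered. A production tool would also validate the partial data before trusting it.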

Security

Security is a constant concern in media workflows. For companies that may not have in-house security expertise and appropriate physical security protocols, cloud storage can be more secure than housing files on-premises. But that is only true if the storage is configured properly and the transfer tools secure assets in flight. Look for tools with a commitment to enterprise-grade security that take precautions to secure every layer involved in file movement and whose security technology and implementation regularly undergo extensive third-party reviews to ensure effective protection. Security also ties back to working with full-stack products vs. building your own solutions. As you go lower in the cloud stack, you have more security controls to configure, and you need to get those configurations correct. A well-designed SaaS product with the right approach to security will take care of all that for you. If you do choose to store assets in the cloud, make sure your Amazon S3 buckets, Azure Blob Storage containers and Google Cloud Storage buckets are configured properly. Each cloud provider offers guidelines to help ensure your assets are secured properly once they land in their storage.

Monitoring, Reporting & Alerts

Hand-in-hand with reliability and security is visibility — the ability to view transfers in progress, report on transfer history and receive alerts when transfers are complete or when things go awry. Asking IT resources to dig through log files when there is an exception or to verify a valid transfer is not a scalable solution. Make sure the tools you choose keep all the necessary constituents informed, whether it be finance who needs to invoice a client when content is delivered, or the operations teams that need to know immediately if something went wrong and who may be asked to verify who has accessed a certain file and when. The tools provided by major cloud vendors offer robust logging of events but will leave your teams processing large log files, building your own dashboards and reports, or deploying third-party tools to view the required information.

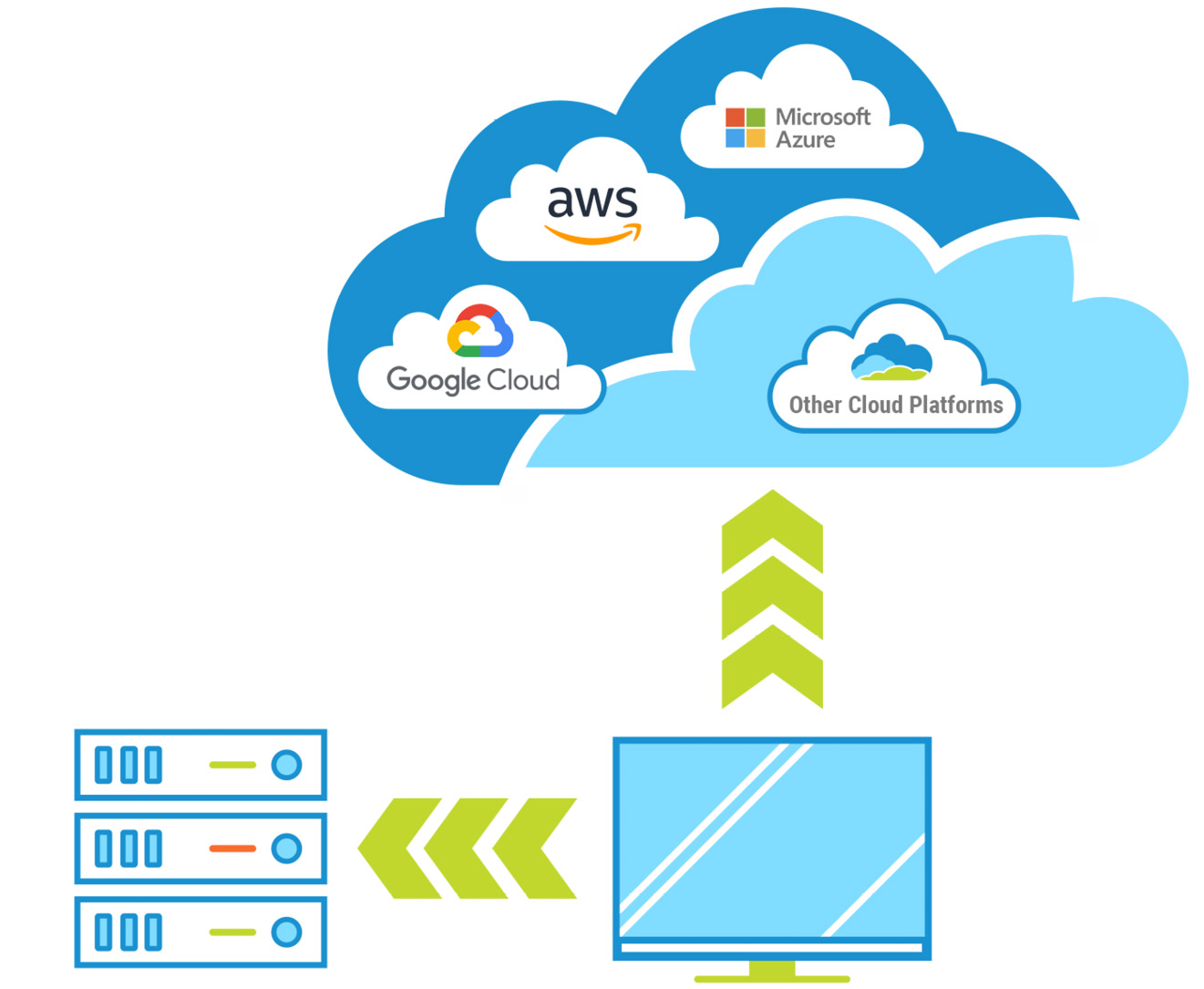

Hybrid Cloud/Multi-cloud

While Amazon remains the dominant player in cloud, Microsoft and Google continue to grow their footprints, and each is innovating nonstop. The cloud wars are on, so it makes sense to maintain the flexibility to work with multiple cloud providers for specific workflows and to be able to easily switch providers as capabilities evolve. And even with the rapid growth of cloud adoption, most media companies are not moving all their assets to the cloud. Instead, they are maintaining a hybrid strategy with some assets in on-prem storage and others in the cloud, sometimes across multiple providers and regions. This is what Signiant refers to as a hybrid cloud/multi-cloud strategy, and it’s a trend that doesn’t appear to be going away.

While the tools offered by cloud providers can be good choices for specific situations, they limit your flexibility. Imagine you went all in with AWS, building a solution with their tools, only to learn that Google has rolled out a new media processing service you wish to use. Your team would be stuck having to learn a whole new platform and rebuild their software to work with Google. In a world defined by constant change and innovation, it’s important to consider tools that act as an abstraction layer, making it seamless to work with on-prem or cloud storage and across multiple cloud providers.
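The idea of an abstraction layer can be sketched as a small interface that workflow code depends on, with one implementation per storage type. Everything here is hypothetical and for illustration only: `ObjectStore`, `LocalDiskStore`, `InMemoryStore` and `transfer` are invented names, and the in-memory class merely stands in for a cloud bucket where a real backend would call the provider’s SDK.

```python
from pathlib import Path
from typing import Dict, Protocol


class ObjectStore(Protocol):
    """The only surface that workflow code is allowed to depend on."""

    def put(self, key: str, data: bytes) -> None: ...

    def get(self, key: str) -> bytes: ...


class LocalDiskStore:
    """On-prem storage modeled as a directory tree."""

    def __init__(self, root: str) -> None:
        self.root = Path(root)

    def put(self, key: str, data: bytes) -> None:
        path = self.root / key
        path.parent.mkdir(parents=True, exist_ok=True)
        path.write_bytes(data)

    def get(self, key: str) -> bytes:
        return (self.root / key).read_bytes()


class InMemoryStore:
    """Stand-in for a cloud bucket; a real backend would wrap a provider SDK."""

    def __init__(self) -> None:
        self._objects: Dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> None:
        self._objects[key] = data

    def get(self, key: str) -> bytes:
        return self._objects[key]


def transfer(key: str, source: ObjectStore, destination: ObjectStore) -> None:
    """Move one object without knowing which storage type is on either side."""
    destination.put(key, source.get(key))
```

Because `transfer` only sees the interface, switching or adding a provider means writing one new backend class rather than reworking every workflow built on top of it.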

Working with Multiple Locations

In cases where content is aggregated from multiple producers in different locations, where creative teams are collaborating from locations around the globe, or where content is distributed to multiple platforms, the cloud can be a powerful resource to support those operations. There are certainly cases where all content is moving from a single location to a single cloud provider but that is more the exception than the rule. In such cases where a single location is in play, some companies will opt to deploy a dedicated connection such as AWS Direct Connect or Microsoft ExpressRoute which can offer a more consistent network experience than the public internet. These offerings can be expensive, especially if not fully utilized (see below), and still often require acceleration software to take full advantage of the bandwidth. While dedicated connections have a role in some workflows, consider whether content will flow to and from a single location, what your utilization will be, and if you’re 100% committed to a single cloud provider. In almost all cases, you’ll find there is a more flexible and more cost-effective approach.

Frequency & Utilization

The next question to ask is how frequently and consistently you will be moving data to the cloud. Is this a one-time move, is it an ongoing backup operation with a steady flow of data, or will the flow of data have unpredictable peaks and valleys?

For a large one-time operation where a massive amount of data needs to be moved from an on-prem data center to the cloud, the cloud providers do offer solutions. After all, it’s in their interest to get your content into their platforms. Amazon offers a service called Snowmobile where you can transfer up to 100PB at a time. They will literally pull up at your data center with a 45-foot-long ruggedized shipping container, pulled by a semi-trailer truck, and haul your data to their data center. This may be a great solution for that one-time, massive move to Amazon, but it clearly wouldn’t make sense for most operations.
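A quick back-of-the-envelope calculation shows why physically shipping 100PB can be attractive. The sketch below estimates how long the same volume would take over a network link; the link speeds are illustrative assumptions, the function name is hypothetical, and the math ignores protocol overhead.

```python
PB = 10**15  # decimal petabyte, in bytes


def transfer_days(data_bytes: float, link_gbps: float, utilization: float = 1.0) -> float:
    """Days needed to move data_bytes over a link kept busy at the given utilization."""
    bits = data_bytes * 8
    seconds = bits / (link_gbps * 1e9 * utilization)
    return seconds / 86_400


# Assumed link speeds, chosen only to show the scale of the problem.
for gbps in (1, 10, 100):
    print(f"100 PB over {gbps} Gbit/s: {transfer_days(100 * PB, gbps):,.0f} days")
```

Even a fully saturated 10 Gbit/s link would need roughly 926 days, about two and a half years, to move 100PB.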

Through our work with many of the world’s top media companies, we’ve observed that estimating utilization is a challenge. More often than not, companies overestimate their intended utilization, leading to unfavorable economics. Services like ExpressRoute from Microsoft and Direct Connect from AWS are costly when not fully utilized, and it’s rare that operations keep these pipes full 100% of the time.

Flexible software solutions that offer consumption-based pricing will help avoid the utilization conundrum.
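The utilization conundrum comes down to simple arithmetic: with a fixed monthly fee, the effective cost per terabyte rises as utilization falls. The sketch below illustrates the relationship. The 1 Gbit/s link and the $3,240 monthly fee are hypothetical numbers picked to make the math easy to follow, not actual pricing from any provider, and the function names are invented for the example.

```python
SECONDS_PER_MONTH = 30 * 86_400  # a 30-day month, assumed for simplicity


def monthly_capacity_tb(link_gbps: float) -> float:
    """Terabytes a link could move in a month if kept 100% full."""
    bytes_per_second = link_gbps * 1e9 / 8
    return bytes_per_second * SECONDS_PER_MONTH / 1e12


def cost_per_tb(monthly_fee: float, link_gbps: float, utilization: float) -> float:
    """Effective cost per TB actually moved on a fixed-price link."""
    return monthly_fee / (monthly_capacity_tb(link_gbps) * utilization)


HYPOTHETICAL_FEE = 3_240.0  # made-up monthly fee in dollars
for utilization in (1.0, 0.5, 0.1):
    print(f"{utilization:.0%} utilized: ${cost_per_tb(HYPOTHETICAL_FEE, 1.0, utilization):.2f} per TB")
```

With these made-up figures, a 1 Gbit/s link can carry 324 TB in a month, so the effective price is $10 per TB when the pipe is full and $100 per TB at 10% utilization, which is the gap that consumption-based pricing is meant to close.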

User Access & Administration

It goes without saying that the big three public cloud providers offer incredibly powerful tools to work with their storage, but they are targeted at developers building solutions, not end users. In M&E, more so than most industries, people are actively involved throughout every stage of the supply chain. Powerful APIs and command line tools are great for developers, but how do your creative end users easily and securely access the media assets they need? These users require a simple user interface to access media assets that is consistent regardless of whether assets are stored on-prem or in the cloud. In fact, the users shouldn’t need to know (or care) where or how the assets are stored as long as they can quickly access the content they need. Along with that, operations teams need tools to add and remove users, set permissions and control the user experience. Look for tools that offer delegated administration so that operations can easily manage teams, partners and projects without IT intervention and do so regardless of which storage type IT chooses to deploy for a given project.

Automated Transfers

While people are actively involved throughout the supply chain, automation is also critical to many operations. Simple, automated backup and disaster recovery operations are a great way to leverage cloud storage. More distribution workflows now involve the cloud as well, including fan-out distribution, where a large asset, such as a DCP, is delivered to multiple places at once. In these cases, the asset is moved first into cloud storage and is then delivered simultaneously to several locations, leveraging the essentially “unlimited” bandwidth of the public cloud providers. Those are just some examples. Throughout the media supply chain there are hot folder workflows, scheduled jobs, and more sophisticated operations triggered by events coming from many systems, and more and more of those workflows include cloud storage somewhere along the way.

Automated workflows should be easy to deploy and manage and should abstract storage so that unattended content exchange can happen between on-prem storage locations and the cloud without having to retool. Historically, automation tools have been complex and prohibitively expensive for most businesses, even large ones. Thankfully, there are now modern SaaS solutions that make it easy and accessible for any business to automate content exchange between all types of storage.
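For readers unfamiliar with hot folder workflows, the core of one can be sketched in a few lines: poll a directory and treat a file as ready once its size has stopped changing between polls, so that half-written files are not picked up. This is a minimal sketch under that assumption; `stable_files` is a hypothetical helper, and real automation products use more robust readiness checks.

```python
import os
from typing import Dict, List


def stable_files(folder: str, previous_sizes: Dict[str, int]) -> List[str]:
    """Return paths whose size is unchanged since the previous poll.

    previous_sizes is updated in place so the caller can pass the same
    dictionary to every poll.
    """
    ready = []
    for name in sorted(os.listdir(folder)):
        path = os.path.join(folder, name)
        if not os.path.isfile(path):
            continue  # ignore subfolders in this simple sketch
        size = os.path.getsize(path)
        if previous_sizes.get(name) == size:
            ready.append(path)  # same size twice in a row: assume writing finished
        previous_sizes[name] = size
    return ready
```

A scheduler would call `stable_files` at a fixed interval and hand each returned path to the transfer step, then move or mark the file so it is not picked up again on the next poll.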

Inter-company Content Exchange

The final consideration in the list, inter-company, is one that is tied closely to automation and is also a growing challenge across the industry. With increasingly complex media supply chains driven by multiple production partners at the front end and multi-platform delivery on the back end, there are simply more companies, big and small, interacting to create and distribute content. With inter-company transfers, each side may not know, need to know, or even care, what type of storage their partner is using. They just need to get the content to the right place in a timely and secure manner. While the basic principles of inter-company content exchange are similar regardless of storage type, cloud storage can introduce some complexity with regard to costs and cost sharing. Each side needs visibility and control of their own systems while keeping their partners out of their networks and storage. If you routinely exchange content with partners, choose tools that work easily with cloud and on-prem storage and that allow for easy and secure setup of inter-company transfer jobs, without sharing passwords and other sensitive information.

“Signiant was the first place we turned,” says Josh Derby, Vice President of Advanced Technology & Development for Discovery, Inc. “For fast file transfer into the cloud, Signiant was the logical choice.”

Solutions That Check All the Boxes for M&E

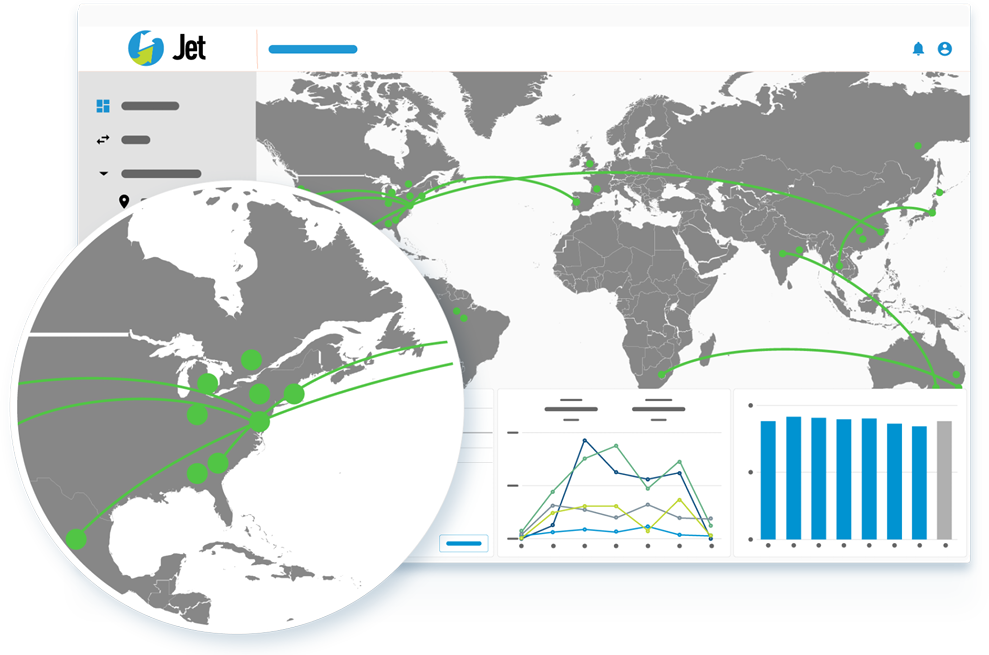

Although the cloud providers offer powerful tools to move content into their platforms, there are full-stack products available that will get media businesses up and running much more quickly and cost effectively. Signiant’s software products have long been trusted by the media industry for mission-critical file transfer applications across the global supply chain. Media Shuttle is the easiest and fastest way for people to send and share large files, anywhere in the world with speed, reliability and security while Jet offers powerfully simple unattended content exchange, within and between companies. Both Media Shuttle and Jet are built on Signiant’s Software-Defined Content Exchange (SDCX) SaaS platform which checks all the boxes when it comes to moving media assets to and from the cloud, or anywhere else for that matter.

- Speed

- Reliability

- Security

- Monitoring, Reporting & Alerts

- Hybrid Cloud/Multi-cloud

- Multiple Locations

- Frequency & Utilization

- User Access & Administration

- Automated Transfers

- Inter-company Content Exchange

Moving Media Assets Into and Out of the Cloud

Cloud object storage is a powerful ally to media companies. Whether it’s a simple backup or disaster recovery operation, overflow when there’s an unexpected surge in storage needs or a more complex workflow where assets are required to be in the cloud, every media company should be equipped to leverage the cloud, if and when a need arises. The global media supply chain continues to grow in complexity with more partners in play, a more distributed workforce and a complex storage landscape driven by the hybrid cloud/multi-cloud imperative. That is why Signiant software is relied upon by more than 50,000 media and entertainment companies to create efficiencies, reduce complexity, and offer an on-ramp to the cloud for media companies of all sizes.