Acceleration

Signiant has long been a market leader in advanced transport technology, holding several patents in this area, and our software is relied upon to move petabytes of high-value content every day.

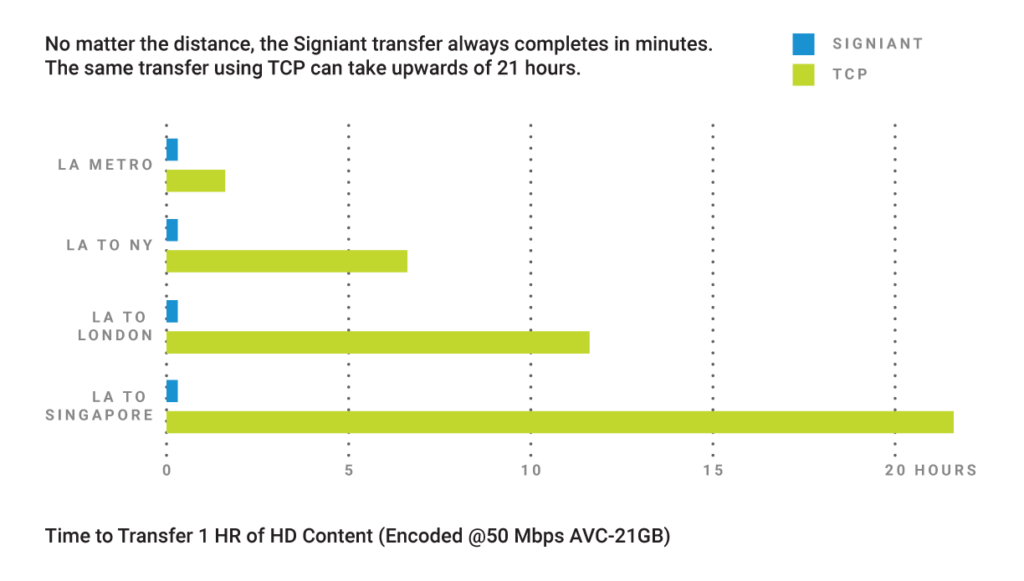

Each of Signiant’s products leverages our proprietary acceleration technology, moving content up to 100x faster than standard Internet transmission speeds. The technology can move files and data sets of any size over any IP network while taking advantage of all available bandwidth.

While our transport technology adds value in many situations, the speed difference is most notable with large data sets, long distances, or highly congested networks and, somewhat counter-intuitively, when more bandwidth is available: the larger the pipe, the more capacity standard transfer methods tend to leave unused.
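To make the bandwidth point concrete, here is a minimal sketch of two well-known back-of-the-envelope limits on a single standard TCP connection: the window/round-trip-time bound and the Mathis approximation for throughput under packet loss. This models generic TCP behavior only and says nothing about how Signiant's protocol works internally; the function names and the sample numbers (64 KiB window, 100 ms round trip) are assumptions chosen for illustration.

```python
import math


def window_limited_mbps(window_bytes: float, rtt_ms: float) -> float:
    """Upper bound on TCP throughput: one window of data per round trip."""
    return window_bytes * 8 / (rtt_ms / 1000) / 1e6


def mathis_mbps(mss_bytes: float, rtt_ms: float, loss_rate: float) -> float:
    """Mathis et al. approximation: (MSS / RTT) * C / sqrt(p), with C = sqrt(3/2)."""
    c = math.sqrt(1.5)
    return (mss_bytes * 8 / (rtt_ms / 1000)) * c / math.sqrt(loss_rate) / 1e6


def utilization(link_mbps: float, achieved_mbps: float) -> float:
    """Fraction of the link actually used (capped at 1.0)."""
    return min(link_mbps, achieved_mbps) / link_mbps


if __name__ == "__main__":
    # Illustrative numbers: 64 KiB window, 100 ms round trip (long-distance path).
    tput = window_limited_mbps(64 * 1024, 100)
    for link in (10, 100, 1000):
        print(f"{link:>5} Mbps link: {utilization(link, tput):.1%} used")
```

With these sample values the window bound works out to about 5.2 Mbps regardless of link size, so the share of the link left idle grows as the link gets faster. That is the effect the paragraph above describes.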

- Signiant’s transport technology eliminates the impact of latency and packet loss

- The entire file is always sent byte for byte, without compression

- Signiant adds Checkpoint Restart on top of its transport technology to automatically restart any interrupted transfer from the point of failure, without losing data
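The general idea behind restarting from the point of failure can be sketched as follows. This is not Signiant's implementation: it is a simplified illustration in which a local file copy stands in for a network transfer, and `resume_copy` and its `fail_after` parameter are hypothetical names used only to simulate an interruption.

```python
import os


def resume_copy(src_path, dst_path, chunk_size=1024 * 1024, fail_after=None):
    """Copy src to dst, continuing from however many bytes dst already holds.

    Returns the offset the copy resumed from. `fail_after` (hypothetical,
    for demonstration) raises ConnectionError once that many bytes have
    been written in this call and more data remains.
    """
    # The checkpoint is simply the number of bytes already at the destination.
    offset = os.path.getsize(dst_path) if os.path.exists(dst_path) else 0
    sent = 0
    with open(src_path, "rb") as src, open(dst_path, "ab") as dst:
        src.seek(offset)  # skip the part that was already transferred
        while True:
            chunk = src.read(chunk_size)
            if not chunk:
                break  # nothing left to send
            if fail_after is not None and sent >= fail_after:
                raise ConnectionError("simulated interruption")
            dst.write(chunk)
            sent += len(chunk)
    return offset
```

Calling `resume_copy` again after an interruption picks up at the returned offset rather than at byte zero, so bytes that already arrived are not sent twice. A production system would also verify the integrity of the partial data before resuming, which this sketch omits.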

Post Office Films

“It [Media Shuttle] saves us tons of time and helps us to properly communicate with our editors around the globe.”

~Dávid Jancsó, Film Editor

Learn More About the Signiant Platform

The Signiant Platform builds on the foundation of our world-class fast file movement apps and adds workflow-centric production services that make working with media even more efficient.