Scale Out Architecture for the Fastest Data Transfers To and From the Cloud

Signiant Flight’s patented scale out architecture for data transfer is the fastest way to move big data and large files to and from cloud services, including cloud object storage (such as Amazon S3 and Microsoft Azure Blob Storage). It is especially useful for global companies with multiple locations that need to ingest or receive files.

Why is scale out architecture so efficient?

A key benefit of Flight’s enhanced scale out architecture is that it can scale a single file transfer across multiple cloud instances. This allows for higher throughput and more consistency with lower cost when compared to a scale up approach.

What’s the difference between scale up and scale out architecture when transferring files from on-premises locations to cloud storage using accelerated file transfer?

Scale Up vs. Scale Out

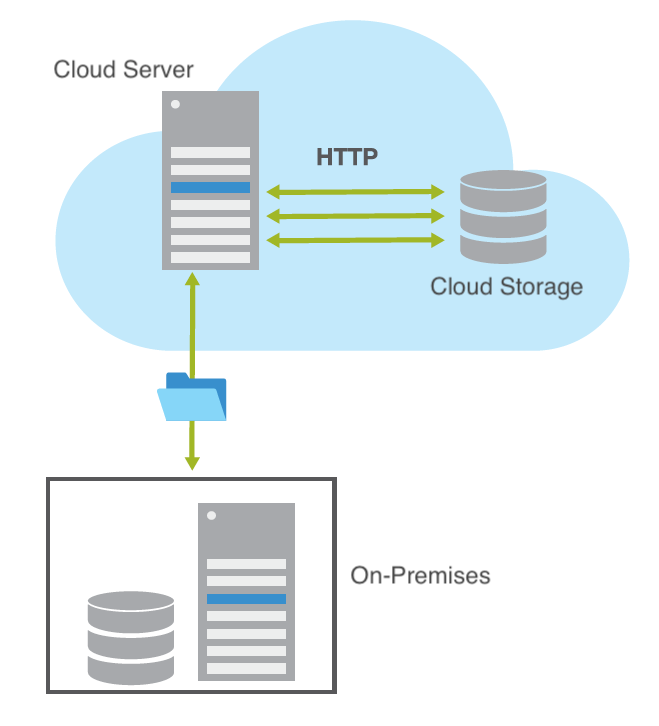

Scale Up Architecture for Accelerated Large File Transfer to the Cloud

In this case, “scale up” refers to using a single large, high-power computer for processing a file transfer. The file can only go as fast as the processing limit of the single machine. When the processing power or interface speed of the specific machine is fully consumed, the transfer can’t go any faster, even when there is more bandwidth available on the network. This approach requires predicting compute resource requirements and utilizes a bigger, more expensive machine to address the challenge of accelerating large file transfers.
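The ceiling described above can be sketched as simple arithmetic. This is an illustrative model only, not a measurement of any product: the function names and the example figures (a 10 Gbps link, a machine that can process 2 Gbps, a 100 GB file) are assumptions chosen for the example.

```python
def scale_up_throughput_gbps(network_gbps: float, machine_limit_gbps: float) -> float:
    """Effective rate is capped by the slower of the network and the single machine."""
    return min(network_gbps, machine_limit_gbps)


def transfer_seconds(file_gigabytes: float, throughput_gbps: float) -> float:
    """Time to move a file, converting gigabytes to gigabits (x8)."""
    return file_gigabytes * 8 / throughput_gbps


# Hypothetical numbers: the link offers 10 Gbps, but the machine tops out at 2 Gbps.
rate = scale_up_throughput_gbps(network_gbps=10.0, machine_limit_gbps=2.0)
print(rate)                           # 2.0
print(transfer_seconds(100.0, rate))  # 400.0
```

In this model, the remaining 8 Gbps of network capacity goes unused until the single machine is replaced with a bigger one.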

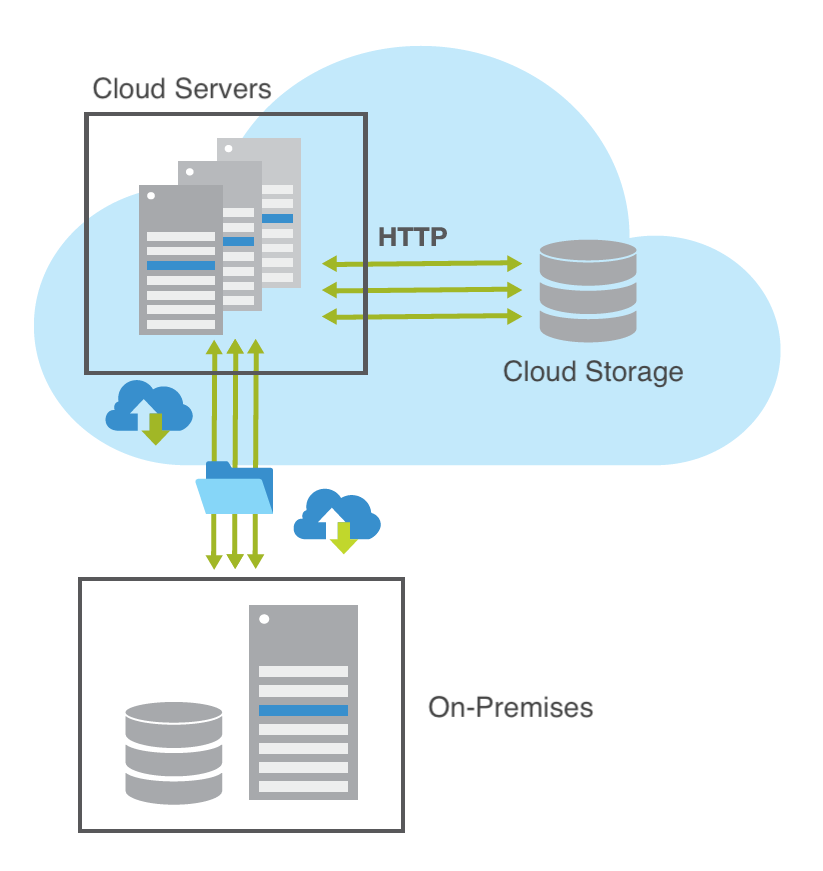

Scale Out Architecture for Accelerated Large File Transfer to the Cloud

Scale out architecture, on the other hand, solves the problem with a commodity computing approach, using an array of low-cost machines and scaling a single file transfer across multiple compute instances or nodes. By using a large number of readily available computing components for parallel processing, you can obtain the greatest amount of useful computation for the least cost (Dorband et al., 2003). System architects recognized the efficiency of commodity computing even before cloud computing had developed.
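A minimal sketch of the general idea, splitting one file into byte ranges that several workers handle in parallel, might look like the following. It is not Flight's implementation: the function names are hypothetical, threads stand in for separate cloud nodes, and `send_part` simply copies bytes in memory where a real node would upload its range.

```python
from concurrent.futures import ThreadPoolExecutor


def scale_out_throughput_gbps(network_gbps: float, node_limit_gbps: float, nodes: int) -> float:
    """Aggregate rate grows with node count until the network becomes the limit."""
    return min(network_gbps, node_limit_gbps * nodes)


def plan_parts(file_size: int, part_size: int) -> list[tuple[int, int]]:
    """Split [0, file_size) into contiguous (offset, length) byte ranges."""
    return [(off, min(part_size, file_size - off)) for off in range(0, file_size, part_size)]


def send_part(data: bytes, part: tuple[int, int]) -> tuple[int, bytes]:
    """Stand-in for one node transferring one byte range; returns (offset, bytes)."""
    offset, length = part
    return offset, data[offset:offset + length]


def scale_out_transfer(data: bytes, part_size: int, node_count: int) -> bytes:
    """Transfer all parts in parallel, then reassemble them in offset order."""
    parts = plan_parts(len(data), part_size)
    with ThreadPoolExecutor(max_workers=node_count) as pool:
        results = list(pool.map(lambda p: send_part(data, p), parts))
    return b"".join(chunk for _, chunk in sorted(results, key=lambda r: r[0]))


payload = bytes(range(256)) * 10
print(plan_parts(10, 4))                                # [(0, 4), (4, 4), (8, 2)]
print(scale_out_transfer(payload, 100, 4) == payload)   # True
print(scale_out_throughput_gbps(10.0, 2.0, 3))          # 6.0
```

Under these assumptions, adding nodes raises the aggregate rate (three 2 Gbps nodes give 6 Gbps) until the 10 Gbps link itself becomes the limit.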

One of the first real advances in computer processing speed was introduced in the 1980s, after an MIT graduate student recognized the “scaling out” advantages of many parallel processors over the huge, expensive, and slow supercomputers of the day (Hillis, 1985). By processing data across thousands of computer processors working in tandem, like the brain’s neural network, the resulting machines were able to smash speed records.

Since then, computer performance has risen sharply and costs have dropped to the point that most of us own at least one computer. High-performance computing applications have also increasingly adopted low-cost commodity systems for tasks that would once have required supercomputers.

The cloud, which is basically a massive cluster of servers and storage, is theoretically a perfect match for scale out architecture. However, cloud software has to be designed to take advantage of the cloud, allowing for efficient management and maintenance of data transfers across multiple nodes.

Signiant Flight’s patented architecture for data transfer is the only solution to date that scales out large file transfers to cloud services.

Resources:

Bondi, André B. (2000). Characteristics of scalability and their impact on performance. Proceedings of the second international workshop on Software and performance – WOSP ’00. p. 195.

Dorband, J. E.; Raytheon, J. P.; Ranawake, U. (2003). Commodity Computing Clusters at Goddard Space Flight Center, Journal of Space Communication.

Hill, Mark D. (1990). “What is scalability?”. ACM SIGARCH Computer Architecture News. 18 (4): 18.

Hillis, W. D. (1985). The Connection Machine, MIT Press. Cambridge, MA.